Can AI Make Science More Trustworthy?

Paper Title: From complexity to clarity: How AI enhances perceptions of scientists and the public’s understanding of science

Author(s) and Year: David M. Markowitz (2024)

Journal: PNAS Nexus (open access)

TL;DR: AI-generated plain-language summaries help readers better understand scientific research and make them view scientists as more trustworthy and credible.

Why I chose this paper: As someone fascinated by how language shapes public trust in science, I was drawn to this study for its timely question: can AI not only simplify research papers but also strengthen how people perceive the scientists behind them?

The Background

AI as a Collaborator

What if artificial intelligence (AI) didn’t replace science communicators, but worked like a first-draft partner – producing clearer summaries that scientists and editors could refine?

That’s the premise behind a study led by David Markowitz. He asked whether AI could help translate dense scientific papers into readable, plain-language summaries and whether that could help people understand the science they read as well as shape how they view scientists themselves.

Every scientific paper begins with an abstract – a tightly packed paragraph that distills months or years of work into a few sentences. For non-specialists, abstracts can feel like encrypted messages. Even “significance statements”, designed to make research more accessible, often remain heavy with jargon.

Markowitz wanted to know: Could AI make science easier to understand without sacrificing accuracy? And if so, could that improve trust in scientists?

His results suggest that the answer is yes. Readers not only understood AI-generated summaries better, but they also viewed the scientists (who they believed wrote them) more positively.

The Research Question

Make it Make Sense

Science communication has long struggled to balance accuracy and accessibility. Technical language can alienate readers. At the same time, large-scale translation of scientific work into plain language requires time, skill and resources that scientists may lack. Altogether, these factors shape public attitudes towards science, which, unfortunately, are not always positive.

This tension is related to a psychological phenomenon called the fluency heuristic: information that is easier to process tends to be judged as more accurate and, ultimately, is more memorable. In other words, when writing is easier to read, we’re more inclined to trust it. Markowitz set out to test whether AI could harness that fluency to support understanding and trust without sacrificing accuracy.

The Methods

The Big Questions

The researcher designed his study around three central questions:

- Are AI-generated summaries linguistically simpler than human-written ones?

- Do simpler summaries improve readers’ comprehension and perceptions of scientists?

- Can AI-written text maintain scientific accuracy while increasing clarity?

To answer these questions, he conducted three studies comparing traditional scientific abstracts, human-written summaries, and AI-generated summaries (produced by GPT-4) of papers published in the journal Proceedings of the National Academy of Sciences (PNAS).

First, text analysis tools were used to measure how simple or readable each of the three summary types was, based on sentence structure and vocabulary. Then, in experiments, participants read human- or AI-generated summaries and were asked to identify the author (human or AI) and to rate the author on traits like credibility, intelligence, and trustworthiness. Finally, participants were tested on their understanding of the summaries through a multiple-choice quiz and a free-response question asking them to briefly explain the research described.
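To make "simplicity based on sentence structure and vocabulary" a little more concrete, here is a rough sketch of the kind of measurement involved. It is not the study's actual tooling, which this summary does not name. The function `simplicity_metrics` and both example sentences are made up for illustration, and the two metrics (words per sentence, characters per word) are only crude stand-ins for proper readability measures.

```python
import re

def simplicity_metrics(text: str) -> dict:
    """Crude readability proxies: words per sentence and characters per word."""
    # Split on sentence-ending punctuation and drop empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    # Treat runs of letters (and apostrophes) as words.
    words = re.findall(r"[A-Za-z']+", text)
    return {
        "sentences": len(sentences),
        "words": len(words),
        "words_per_sentence": len(words) / len(sentences) if sentences else 0.0,
        "chars_per_word": sum(len(w) for w in words) / len(words) if words else 0.0,
    }

# Hypothetical example texts, written for this sketch (not taken from the paper).
technical = (
    "Heterogeneous nucleation kinetics substantially modulate crystallization "
    "trajectories in supersaturated electrolyte solutions."
)
plain = (
    "Crystals form faster when they have a surface to grow on. "
    "We measured how much faster."
)

for label, text in [("technical", technical), ("plain", plain)]:
    print(label, simplicity_metrics(text))
```

Lower values on both metrics point to shorter sentences and shorter (often more common) words, which is the general direction the study associates with simpler summaries.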

The Results

Simpler Writing Translates to Stronger Impact

Across these analyses, AI-generated summaries were consistently simpler than both traditional abstracts and human-written lay summaries. AI used shorter sentences and more common vocabulary than human authors did, making its summaries roughly twice as linguistically readable.

That clarity shaped perception. When participants read summaries without knowing who (or what) wrote them, those who received AI summaries rated the scientists as more credible and trustworthy, though slightly less intelligent. Many readers also assumed that the clearer writing was made by a person.

Clarity also improved comprehension. Participants who read AI summaries scored higher on quizzes and produced more accurate explanations for the free-response question. Simpler language didn’t just change how scientists were perceived; it also measurably improved understanding and recall of the material.

The Impact

AI-Assisted Communication Can Build Trust

These findings suggest that AI could become a powerful tool in science communication. Journals and scientists might use AI tools to generate accessible summaries quickly and at scale, narrowing the language gap that separates researchers from the public.

Clearer writing appears to foster trust. When readers can understand a study, they’re more likely to see scientists as transparent and credible.

Crucially, this work reframes AI not as a replacement for human communicators but as a collaborator, specifically a first-draft generator. Scientists and editors still play an essential role in verifying accuracy, restoring nuance and maintaining ethical transparency. For example, human oversight is required to prevent oversimplification of scientific concepts, and disclosure of AI involvement is necessary to maintain transparency.

The takeaway is simple: readability is not optional but foundational. If AI tools like GPT-4 can lower linguistic barriers while communicators safeguard rigor and ethics, we can find new ways to bring audiences closer to science and the people behind it.

—

Written by Anika Zaman

Edited by Brita Kilburg-Basnyat and Crystal Hrelic Colón

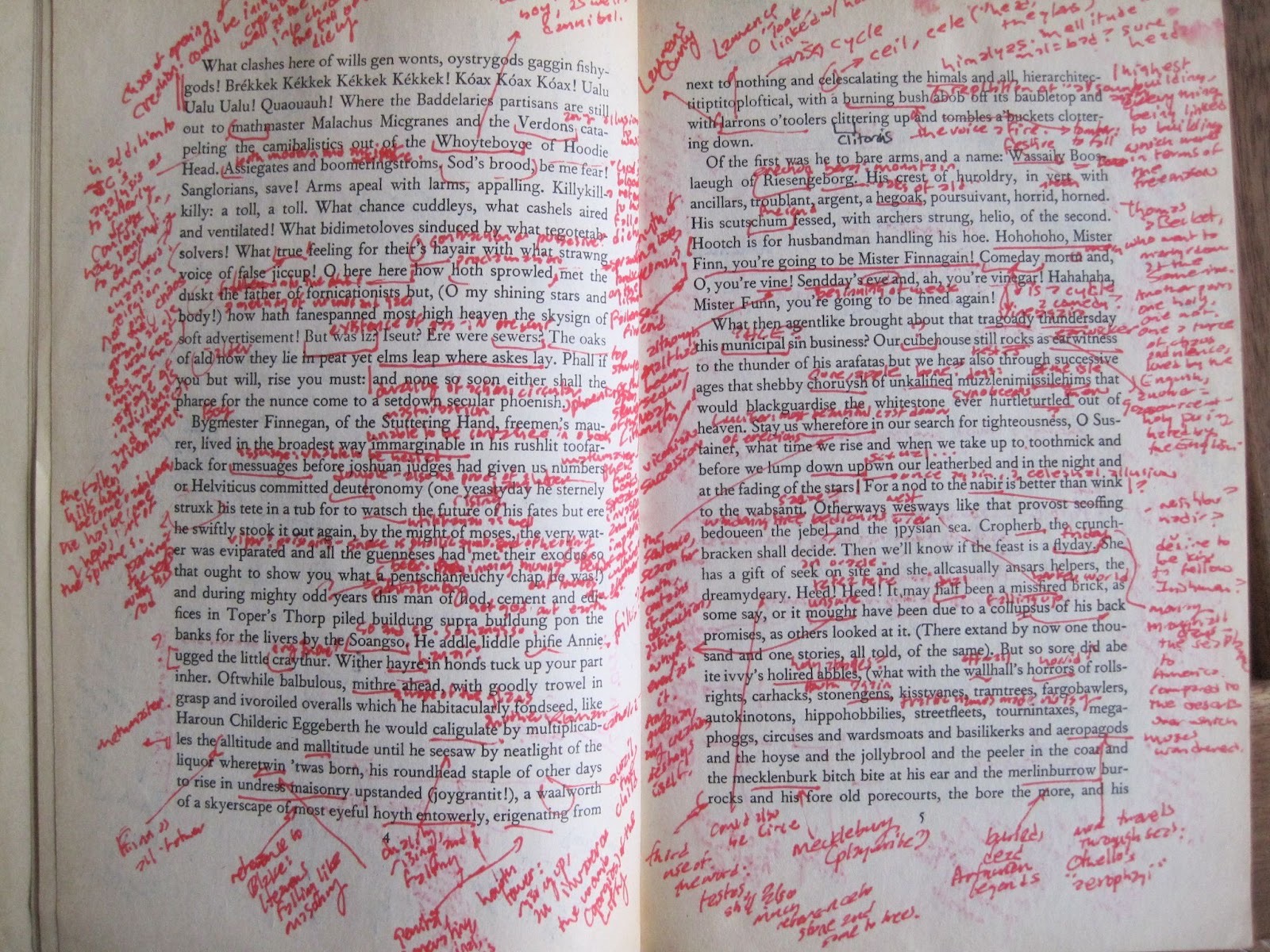

Featured image credit: Karl Steel via Flickr, licensed under CC BY-SA 2.0.